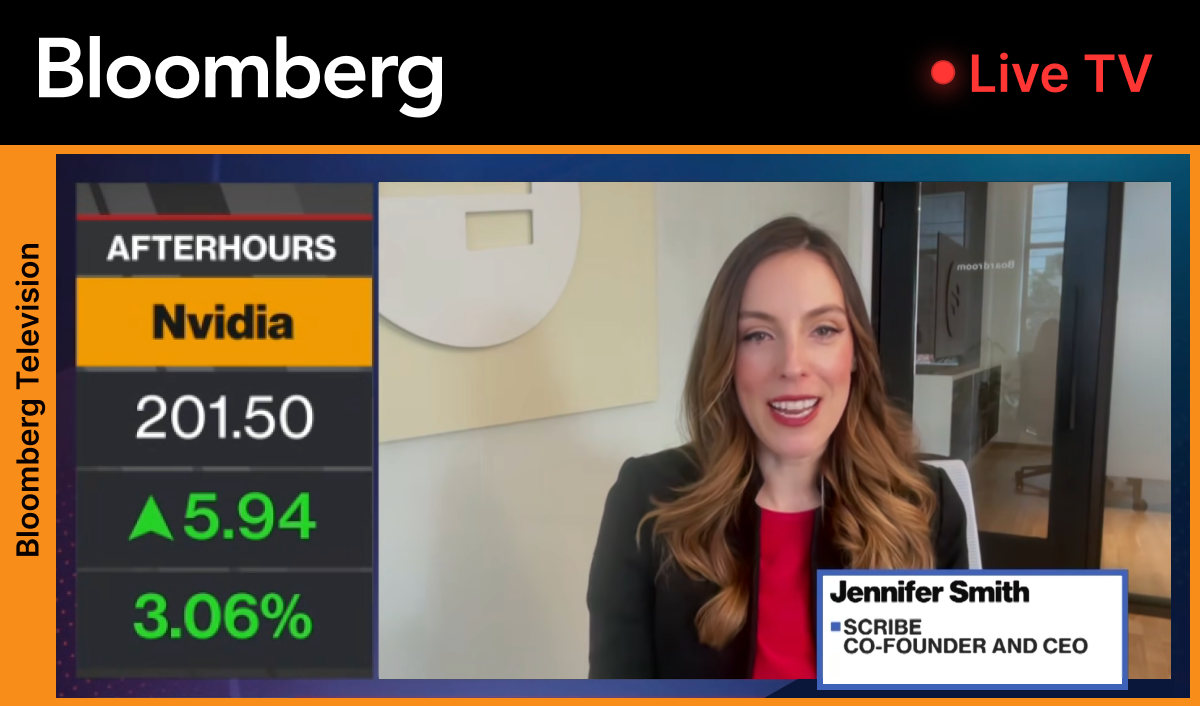

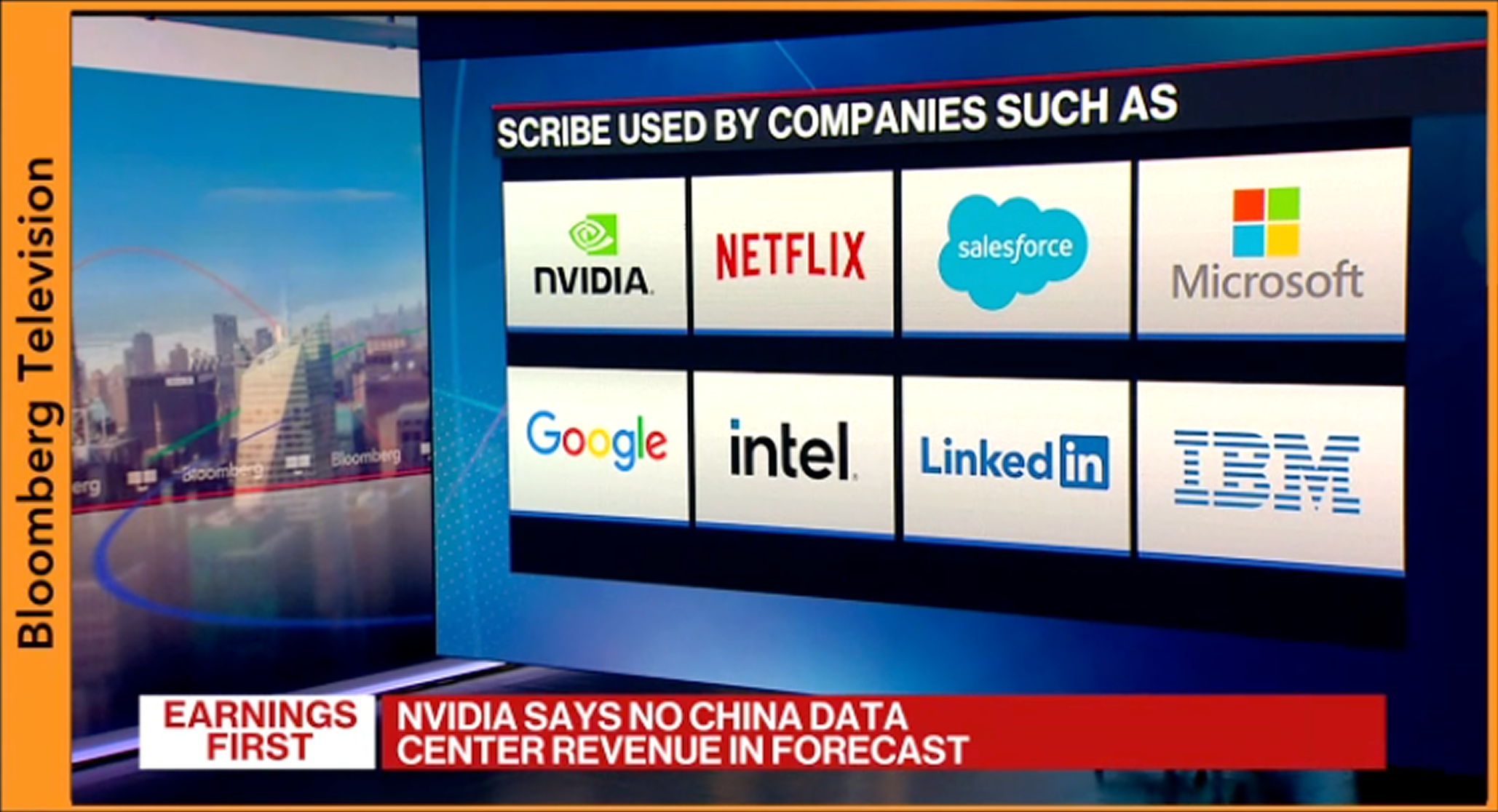

Nvidia released earnings for fiscal year 2026, and Bloomberg had us on to talk about AI and what it means for the future of work.

One theme kept coming up: the infrastructure layer is improving fast. Models are getting more capable. Compute is getting cheaper. Agent adoption is accelerating. But for most enterprises, measurable ROI is still elusive. Not because AI isn’t powerful, but because organizations aren’t set up to use it effectively.

Nearly every enterprise is racing to adopt AI agents. Boards are excited. Budgets are allocated. Pilots launch fast.

But here’s the hard part: most companies aren’t set up for agents to succeed. This isn’t because the models aren’t good enough, but because the agents don’t have the one ingredient they need to operate inside your business. They need a living, accurate description of how work actually happens across teams and tools. That gap is why so many AI projects stall before they deliver productivity gains at scale.

“Every enterprise is racing to adopt agents, but almost none of them have the basic ingredient they need: a living, accurate description of how their business actually works.” — Jennifer Smith, CEO, Scribe

The uncomfortable truth: most AI spend is still experimentation

Enterprise AI is still largely experimental. Many teams are using AI tools at the individual or small-team level, but that doesn’t automatically translate into measurable ROI for the organization.

The data backs it up:

- MIT reports that 95% of enterprise AI pilots aren’t delivering ROI, a “GenAI Divide” where only a small fraction see real returns.

- Gartner predicts over 40% of agentic AI projects will be canceled by the end of 2027 due to unclear business value, escalating costs, or inadequate risk controls.

- McKinsey reports only 1% of leaders consider their companies “mature” in AI deployment (fully integrated into workflows and driving substantial business outcomes).

This isn’t a “your team isn’t trying hard enough” problem. It’s a context problem.

Models are generic. Your business is not.

Foundation models are incredibly powerful, but they’re trained on generalized data. They can generate smart answers in the abstract. But enterprises don’t run on abstractions. They run on workflows: handoffs, approvals, exceptions, dependencies, and the unwritten “how we do things here.” The real unlock is feeding those models the specific workflows, IP, and know-how that make your company different from anyone else.

Most companies don’t have a single place they can hand to an agent and say: “This is what we do, and this is how work actually gets done.”

So what happens instead? Agents get dropped into partial context — some docs, some tickets, maybe a knowledge base — and they’re expected to “figure it out.” In real-world conditions, that’s when they fail: not because they’re dumb, but because they’re missing the operational reality that turns intelligence into execution.

“General AI can give you smart answers in the abstract. Context is what turns that intelligence into something that can operate inside your organization, on your real workflows.” — Jennifer Smith, CEO, Scribe

What “workflow context” actually means

When we say “context,” we don’t mean more documentation for documentation’s sake. We mean usable operational context — the kind that lets a system understand and act:

- The real steps people take across tools (not just the ideal process)

- Decision points and “it depends” rules

- Prerequisites, dependencies, and handoffs between teams

- Definitions of done and quality checks

- The outcomes you’re optimizing for (time, cost, quality, risk)

This is why the few successful AI deployments tend to look similar: they’re deeply integrated into real workflows, not bolted on top of them.
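To make that list concrete, here is a minimal sketch of what “usable operational context” could look like as structured data. Everything in it is illustrative: the class names (`Step`, `DecisionRule`, `WorkflowContext`), the fields, and the refund example are assumptions made for this post, not a description of any product’s schema.

```python
from dataclasses import dataclass, field


@dataclass
class Step:
    """One real step a person takes, in a specific tool (hypothetical shape)."""
    name: str
    tool: str
    team: str
    depends_on: list[str] = field(default_factory=list)  # names of prerequisite steps


@dataclass
class DecisionRule:
    """An 'it depends' rule, written down instead of living in someone's head."""
    condition: str
    then: str


@dataclass
class WorkflowContext:
    """Illustrative container for the five kinds of context listed above."""
    name: str
    steps: list[Step]
    decision_rules: list[DecisionRule] = field(default_factory=list)
    definition_of_done: list[str] = field(default_factory=list)
    target_outcomes: dict[str, str] = field(default_factory=dict)

    def handoffs(self) -> list[tuple[str, str]]:
        """Return (from_team, to_team) pairs where a step waits on another team."""
        by_name = {step.name: step for step in self.steps}
        pairs = []
        for step in self.steps:
            for dep in step.depends_on:
                upstream = by_name[dep]
                if upstream.team != step.team:
                    pairs.append((upstream.team, step.team))
        return pairs

    def missing_pieces(self) -> list[str]:
        """Name the kinds of context that have not been captured yet."""
        captured = {
            "steps": self.steps,
            "decision_rules": self.decision_rules,
            "definition_of_done": self.definition_of_done,
            "target_outcomes": self.target_outcomes,
        }
        return [kind for kind, value in captured.items() if not value]


# Hypothetical example: a customer refund that crosses two teams and three tools.
refund = WorkflowContext(
    name="Customer refund",
    steps=[
        Step("verify_order", tool="Helpdesk", team="Support"),
        Step("approve_refund", tool="ERP", team="Finance", depends_on=["verify_order"]),
        Step("notify_customer", tool="Email", team="Support", depends_on=["approve_refund"]),
    ],
    decision_rules=[
        DecisionRule("amount is over the approval limit", "escalate to a finance manager"),
    ],
    definition_of_done=["refund issued", "customer notified"],
)

print(refund.handoffs())        # cross-team handoffs an agent would need to respect
print(refund.missing_pieces())  # context still to capture before handing this to an agent
```

In this example the outcomes were never written down, so `missing_pieces()` flags `target_outcomes`. That is the kind of gap that is easy to overlook in a demo and costly once an agent is acting on the workflow.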

The strategic question for enterprises

Enterprise leaders are asking the same question right now:

How do we turn what our company uniquely knows — its workflows, processes, and institutional know-how — into structured context that AI agents can actually use?

That’s the bridge between:

- Flashy demos → durable ROI

- Individual productivity → org-wide efficiency

- Experiments → systems that compound

And this is where the opportunity is getting bigger, not smaller. Models and infrastructure keep improving and getting cheaper — so the differentiator is increasingly your context layer and how quickly you can make it usable.

The path forward: move up the Context Maturity Curve

The companies making real progress aren’t running more pilots. They’re building the missing layer: a living map of how work actually gets done, then using it to deploy AI safely and measurably across workflows.

That’s exactly what the Context Maturity Curve is for: to help you identify where you are today, what’s missing, and the most practical next step to unlock more AI autonomy and ROI.

If you want a clear starting point: take the diagnostic assessment, get your scorecard, and use it to align leaders and teams on what to capture next — so your next “agent” deployment is set up to succeed.